As artificial intelligence becomes deeply embedded in business workflows, education, marketing, software development, and customer experience, the craft of writing effective prompts has evolved into a discipline of its own. Organizations are no longer experimenting casually with AI—they are building repeatable systems around it. This shift has created demand for structured environments where prompts can be created, tested, optimized, versioned, and shared across teams. Prompt engineering platforms answer that need by turning ad-hoc experimentation into a managed, measurable process.

TL;DR: Prompt engineering platforms help teams design, test, optimize, and manage AI prompts in a structured way. They provide tools for version control, collaboration, analytics, A/B testing, and performance monitoring. These platforms reduce guesswork, improve output quality, and make AI systems more reliable at scale. As organizations increasingly depend on AI, structured prompt management becomes essential rather than optional.

The Rise of Structured Prompt Management

In the early days of generative AI, most prompt creation happened inside chat windows. A user would type an instruction, tweak it repeatedly, and copy the best version elsewhere. While effective for individual exploration, this approach becomes problematic in team environments.

Common challenges include:

- No version control – Teams cannot track which prompt variation produced the best results.

- Lack of reproducibility – Results cannot be reproduced when model settings and parameters go undocumented.

- Collaboration gaps – Prompt knowledge remains siloed with individual users.

- No systematic testing – Performance improvements rely on guesswork rather than structured experiments.

Prompt engineering platforms bring discipline to the process. They treat prompts more like software assets—objects that can be tested, refined, reviewed, and deployed within structured systems.

Core Features of Prompt Engineering Platforms

Most modern prompt management tools offer a combination of development, experimentation, and monitoring functionality. While capabilities vary, several core features define the category.

1. Prompt Version Control

Version control allows teams to track changes to prompts over time. Just as developers track code revisions, prompt engineers can compare iterations and analyze how changes affect outputs.

This typically includes:

- Revision history logs

- Named versions and labeling

- Rollback functionality

- Commenting and documentation

This structure eliminates confusion over which version is currently live in production systems.
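
To make the idea concrete, here is a minimal sketch of a revision log with named labels and rollback. The `PromptHistory` class and its method names are hypothetical and not taken from any particular platform; a real tool would also persist the history and record authors.

```python
from dataclasses import dataclass, field


@dataclass
class PromptHistory:
    """Hypothetical in-memory revision log for a single prompt."""
    versions: list = field(default_factory=list)  # revision history, oldest first
    labels: dict = field(default_factory=dict)    # label name -> version number

    def commit(self, text, note=""):
        """Append a new revision and return its 1-based version number."""
        self.versions.append({"text": text, "note": note})
        return len(self.versions)

    def label(self, name, version):
        """Point a named label (for example 'production') at a version."""
        if not 1 <= version <= len(self.versions):
            raise ValueError(f"unknown version {version}")
        self.labels[name] = version

    def get(self, name):
        """Return the prompt text a label currently points to."""
        return self.versions[self.labels[name] - 1]["text"]


history = PromptHistory()
v1 = history.commit("Summarize the ticket.", note="initial draft")
v2 = history.commit("Summarize the ticket in two sentences.", note="tighter length")
history.label("production", v2)
history.label("production", v1)  # rollback: repoint the label, history stays intact
```

Because rollback only moves a label, no revision is ever overwritten, and the question of which version is live has a single answer.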

2. A/B Testing and Experimentation

One of the most powerful features in prompt engineering platforms is structured experimentation. Teams can run side-by-side comparisons of prompt variations to evaluate quality, tone, accuracy, or other metrics.

Testing frameworks often allow:

- Controlled variable adjustments

- Batch testing across datasets

- Human or automated evaluation scoring

- Side-by-side output comparison

This systematic approach replaces intuition with measured comparisons between variants.
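
A side-by-side comparison can be sketched in a few lines. Everything here is an assumption made for illustration: `fake_model` stands in for a real model call, and `score` is a toy metric that only checks a length budget. The point is the shape of the experiment, in which every variant runs over the same inputs.

```python
from statistics import mean


def fake_model(prompt, text):
    """Stand-in for a real model call: returns more words when asked for 'detail'."""
    words = text.split()
    limit = 8 if "detail" in prompt else 4
    return " ".join(words[:limit])


def score(output, max_words=5):
    """Toy automated metric: 1.0 if the output respects the length budget."""
    return 1.0 if len(output.split()) <= max_words else 0.0


def run_experiment(variants, dataset):
    """Run every variant over the same inputs and return its mean score."""
    return {
        name: mean(score(fake_model(prompt, item)) for item in dataset)
        for name, prompt in variants.items()
    }


variants = {
    "A": "Summarize briefly.",
    "B": "Summarize in detail.",
}
dataset = [
    "The customer reports that the mobile app crashes on login",
    "Refund was issued twice for the same order last week",
]
results = run_experiment(variants, dataset)
winner = max(results, key=results.get)
```

In practice the scoring function is where most of the effort goes; the loop around it stays this simple.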

3. Parameter Configuration Management

Prompts rarely function alone—they operate alongside model parameters such as temperature, token limits, and system instructions. Prompt platforms centralize control over these configuration variables.

This improves output stability and makes experiments easier to reproduce across environments, because every result can be traced back to the exact settings that produced it.
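
One way to picture centralized configuration is a single immutable record that bundles the prompt with its settings. The `PromptConfig` class, its field names, and its default values below are illustrative assumptions, not any vendor's schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class PromptConfig:
    """Everything needed to reproduce a run, kept in one immutable record."""
    system: str
    template: str
    temperature: float = 0.2  # illustrative default, not a recommendation
    max_tokens: int = 256     # illustrative default

    def fingerprint(self):
        """Short stable hash: identical settings always give the same ID."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


base = PromptConfig(system="You are a support agent.", template="Answer: {question}")
same = PromptConfig(system="You are a support agent.", template="Answer: {question}")
hotter = PromptConfig(
    system="You are a support agent.",
    template="Answer: {question}",
    temperature=0.9,
)
```

Storing the fingerprint next to each logged output makes it possible to tell whether two results came from the same configuration.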

4. Collaboration and Access Control

Enterprise environments require permission systems. Prompt management platforms provide:

- Role-based access

- Shared prompt libraries

- Team workspaces

- Commenting and approval workflows

This transforms prompts from individual experiments into shared organizational assets.
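
Role-based access combined with an approval step can be sketched as a lookup table and a guard. The role names and permissions below are hypothetical examples; real platforms define their own.

```python
# Hypothetical roles and the actions each one may perform.
ROLE_PERMISSIONS = {
    "viewer": {"read"},
    "editor": {"read", "edit", "comment"},
    "approver": {"read", "comment", "approve"},
}


def can(role, action):
    """Return True if the role is allowed to perform the action."""
    return action in ROLE_PERMISSIONS.get(role, set())


def approve_change(change, reviewer_role):
    """Return an approved copy of a pending change, if the reviewer may approve."""
    if not can(reviewer_role, "approve"):
        raise PermissionError(f"role '{reviewer_role}' cannot approve changes")
    return {**change, "status": "approved"}


change = {"prompt": "welcome_email", "status": "pending"}
approved = approve_change(change, "approver")
```

Calling `approve_change(change, "editor")` raises `PermissionError`, which is the behavior an approval workflow depends on.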

Why Businesses Need Prompt Engineering Platforms

As AI outputs increasingly influence public-facing materials—customer support scripts, marketing emails, knowledge base articles, product descriptions—the cost of inconsistency rises. A poorly optimized prompt can generate:

- Inaccurate answers

- Brand voice inconsistencies

- Compliance risks

- Customer dissatisfaction

Structured prompt management mitigates these risks by introducing repeatability and oversight.

Prompt engineering platforms are especially valuable in sectors such as:

- Ecommerce – Automating product description generation

- Customer Support – Powering AI chat systems

- Software Development – Assisting code generation

- Healthcare and Legal – Drafting sensitive documentation

- Marketing Agencies – Producing brand-aligned content at scale

In these settings, performance consistency is more important than creative experimentation.

Testing Frameworks and Evaluation Methods

Testing prompts is not straightforward because outputs are qualitative rather than purely numeric. Advanced platforms address this through hybrid evaluation techniques.

Human-in-the-Loop Evaluation

Users manually rate responses according to criteria such as:

- Accuracy

- Relevance

- Tone alignment

- Clarity

- Creativity

Scores are aggregated to compare prompt variants.
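
As a rough illustration of that aggregation, the sketch below averages reviewer ratings per variant and per criterion. The rating scale (1 to 5) and the sample data are invented for the example.

```python
from collections import defaultdict
from statistics import mean

# Invented reviewer ratings on a 1-5 scale.
ratings = [
    {"variant": "A", "accuracy": 4, "clarity": 5},
    {"variant": "A", "accuracy": 5, "clarity": 4},
    {"variant": "B", "accuracy": 3, "clarity": 4},
]


def aggregate(ratings, criteria):
    """Average each criterion separately for every prompt variant."""
    grouped = defaultdict(list)
    for row in ratings:
        grouped[row["variant"]].append(row)
    return {
        variant: {c: mean(row[c] for row in rows) for c in criteria}
        for variant, rows in grouped.items()
    }


summary = aggregate(ratings, ["accuracy", "clarity"])
```

Keeping criteria separate, rather than collapsing them into one number, shows where a variant is weak and not just that it is weak.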

Automated Scoring Systems

Some systems integrate model-based evaluation tools that assess outputs programmatically. These may estimate:

- Semantic similarity

- Instruction adherence

- Sentiment accuracy

- Hallucination likelihood

Although not perfect, automation accelerates large-scale testing.
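
Production systems typically rely on embeddings or a second model for these estimates. As a deliberately crude stand-in, the sketch below uses word overlap (Jaccard similarity) as a proxy for semantic similarity and a word count as an instruction-adherence check. Both functions are simplifications chosen for illustration, not what any specific platform does.

```python
import re


def tokens(text):
    """Lowercase word set, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap_score(output, reference):
    """Jaccard overlap of word sets: 1.0 means identical vocabulary."""
    a, b = tokens(output), tokens(reference)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def follows_length_rule(output, max_words):
    """Simple adherence check for a 'no more than N words' instruction."""
    return len(output.split()) <= max_words


reference = "Reset your password from the login page"
output = "Reset your password from the settings page"
similarity = overlap_score(output, reference)
```

Word overlap cannot tell that "login" and "settings" change the meaning of the answer, which is exactly the kind of gap that keeps human review in the loop.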

Dataset Testing Environments

Advanced platforms allow prompts to run against predefined testing datasets. This simulates real-world queries at scale and reveals weaknesses before deployment.

Such pre-deployment validation is especially critical for high-volume AI systems.
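
A pre-deployment check of this kind might look like the following sketch. The `fake_model` lookup table replaces a real model call, and the test cases and the 0.9 threshold are arbitrary example values.

```python
def fake_model(prompt, query):
    """Stand-in for a real model call; answers from a tiny lookup table."""
    answers = {
        "How do I reset my password?": "Use the reset link on the login page.",
        "What is your refund policy?": "Refunds are available within 30 days.",
        "Do you ship overseas?": "Please contact support.",
    }
    return answers.get(query, "")


# Each case pairs a realistic query with a phrase the answer must contain.
TEST_CASES = [
    {"query": "How do I reset my password?", "must_include": "reset link"},
    {"query": "What is your refund policy?", "must_include": "30 days"},
    {"query": "Do you ship overseas?", "must_include": "ship"},
]


def pass_rate(prompt, cases):
    """Fraction of cases whose output contains the expected phrase."""
    passed = sum(
        case["must_include"] in fake_model(prompt, case["query"]).lower()
        for case in cases
    )
    return passed / len(cases)


rate = pass_rate("You are a helpful support agent.", TEST_CASES)
ready_to_deploy = rate >= 0.9  # threshold is an arbitrary example value
```

Here the shipping question fails its check, so the prompt is held back until that weakness is addressed.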

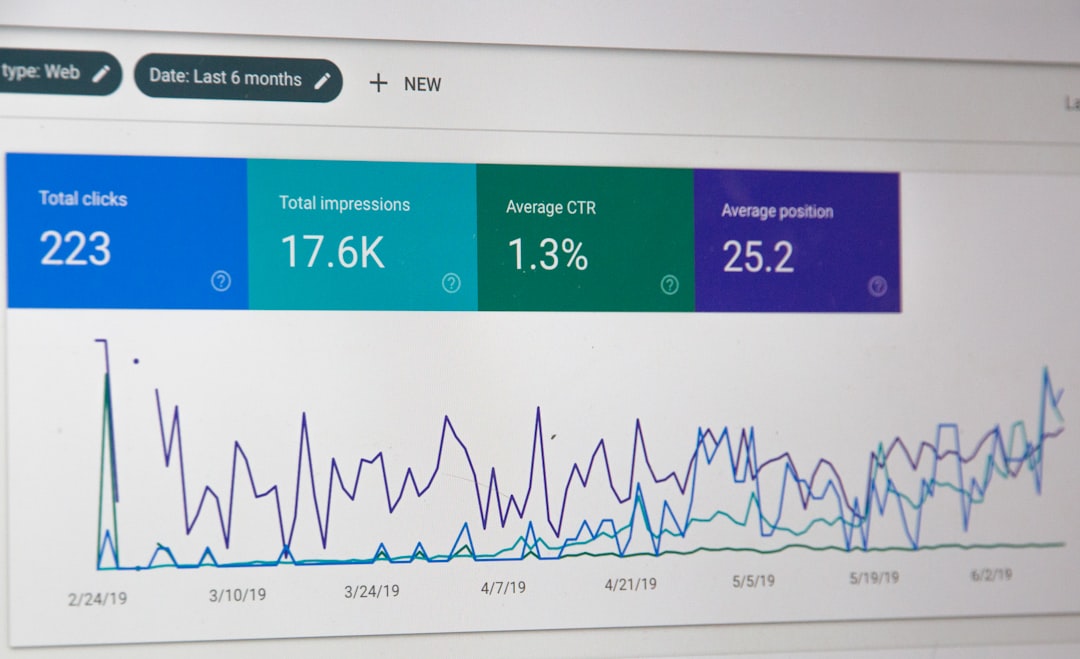

Analytics and Monitoring in Production

Managing prompts does not stop at deployment. Ongoing monitoring is essential for optimization.

Prompt engineering platforms often include dashboards that track:

- Response success rate

- Error frequency

- Token usage and cost

- User engagement metrics

- Output quality ratings

This visibility helps organizations refine prompts continuously rather than treating them as static configurations.
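
The numbers behind such a dashboard are simple aggregates over call logs. In the sketch below the log entries and the price per thousand tokens are made up; actual pricing depends on the provider and model.

```python
# Hypothetical call log; a real platform would collect these automatically.
CALLS = [
    {"ok": True, "prompt_tokens": 120, "completion_tokens": 80},
    {"ok": False, "prompt_tokens": 100, "completion_tokens": 0},
    {"ok": True, "prompt_tokens": 180, "completion_tokens": 120},
]
PRICE_PER_1K_TOKENS = 0.002  # made-up price, check your provider's rates


def summarize(calls, price_per_1k):
    """Compute success rate, total token usage, and estimated cost."""
    total_tokens = sum(c["prompt_tokens"] + c["completion_tokens"] for c in calls)
    successes = sum(1 for c in calls if c["ok"])
    return {
        "success_rate": successes / len(calls),
        "total_tokens": total_tokens,
        "estimated_cost": total_tokens / 1000 * price_per_1k,
    }


report = summarize(CALLS, PRICE_PER_1K_TOKENS)
```

Tracking these figures per prompt version is what turns monitoring into an input for the next round of experiments.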

Integration with Development Workflows

AI functionality increasingly integrates directly into software products through APIs. Prompt management platforms support this integration with:

- API deployment pipelines

- Environment separation (development, staging, production)

- Webhook triggers

- SDK integrations

This bridges the gap between prompt design and live application deployment. Instead of pasting prompt text directly into application code, teams can keep each environment in sync with the version the platform currently marks as deployed.
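
Environment separation can be pictured as a mapping from each environment to a prompt version. The `PromptRegistry` class below is a hypothetical in-memory sketch; a real platform would expose something comparable through its API or SDK.

```python
class PromptRegistry:
    """Hypothetical registry mapping environments to prompt versions."""

    def __init__(self):
        self.versions = {}  # (name, version) -> prompt text
        self.deployed = {}  # (name, environment) -> version

    def add(self, name, version, text):
        """Store the text of one prompt version."""
        self.versions[(name, version)] = text

    def deploy(self, name, version, environment):
        """Mark an existing version as live in the given environment."""
        if (name, version) not in self.versions:
            raise KeyError(f"{name} v{version} does not exist")
        self.deployed[(name, environment)] = version

    def resolve(self, name, environment):
        """Return the prompt text currently live in the given environment."""
        return self.versions[(name, self.deployed[(name, environment)])]


registry = PromptRegistry()
registry.add("support_reply", 1, "Answer politely.")
registry.add("support_reply", 2, "Answer politely and cite the help article.")
registry.deploy("support_reply", 1, "production")
registry.deploy("support_reply", 2, "staging")
```

Application code only ever calls `resolve`, so promoting version 2 to production is a registry change and requires no code change.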

Governance, Compliance, and Documentation

In regulated industries, AI systems require transparency. Prompt engineering platforms improve governance through:

- Audit trails

- Usage logs

- Access tracking

- Documentation storage

These features help businesses demonstrate responsible AI usage. They also allow quick investigation if outputs deviate from policy guidelines.
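
At its simplest, an audit trail is an append-only list of who did what and when. The `AuditLog` class and the user names below are invented for illustration; a production system would also need durable, tamper-resistant storage.

```python
from datetime import datetime, timezone


class AuditLog:
    """Append-only record of who did what to which prompt."""

    def __init__(self):
        self._entries = []

    def record(self, user, action, prompt):
        """Add one timestamped entry; existing entries are never modified."""
        self._entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "prompt": prompt,
        })

    def for_prompt(self, prompt):
        """Return copies of all entries about one prompt, oldest first."""
        return [dict(e) for e in self._entries if e["prompt"] == prompt]


log = AuditLog()
log.record("dana", "edit", "refund_reply")
log.record("lee", "approve", "refund_reply")
log.record("dana", "edit", "welcome_email")
trail = log.for_prompt("refund_reply")
```

When an output deviates from policy, a query like `for_prompt` is the starting point for finding which change introduced the problem.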

Scaling Prompt Libraries

As organizations expand AI systems, they often accumulate hundreds—or thousands—of prompt variations. Without categorization, this becomes unmanageable.

Structured libraries typically include:

- Tagging systems

- Search and filtering

- Folder hierarchies

- Usage metadata

This organization ensures valuable prompt knowledge is not lost or duplicated.
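
Tagging and usage metadata make a library searchable with very little machinery. The entries, tag names, and usage counts in this sketch are invented.

```python
# Invented library entries with tags and usage counts.
LIBRARY = [
    {"name": "refund_reply", "tags": {"support", "email"}, "uses": 420},
    {"name": "product_blurb", "tags": {"ecommerce", "marketing"}, "uses": 95},
    {"name": "apology_note", "tags": {"support", "email", "tone"}, "uses": 610},
]


def search(library, required_tags):
    """Return prompts carrying all required tags, most used first."""
    matches = [p for p in library if required_tags <= p["tags"]]
    return sorted(matches, key=lambda p: p["uses"], reverse=True)


support_emails = [p["name"] for p in search(LIBRARY, {"support", "email"})]
```

Sorting by usage surfaces the prompts a team already trusts, which discourages writing near-duplicates.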

Common Types of Prompt Engineering Platforms

The ecosystem can generally be divided into categories:

- Developer-Centric Platforms – Focused on API integration and code deployment.

- Enterprise Collaboration Platforms – Emphasizing governance and analytics.

- No-Code Experimentation Tools – Designed for marketers and content teams.

- Open Source Frameworks – Allowing customizable testing environments.

Each organization must evaluate which type aligns with its technical maturity and operational scale.

The Future of Prompt Engineering Platforms

As foundation models become more capable, prompts will still play a central role. However, the interaction may evolve toward:

- Modular prompt components

- Dynamic context injection

- Automated prompt optimization

- AI-assisted prompt rewriting

- Self-evaluating systems

Prompt platforms are likely to incorporate meta-learning systems that automatically refine prompts based on observed output performance.

Rather than eliminating prompt engineering, advanced AI models may amplify the need for structured oversight and systematic management.
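
Two of the directions listed above, modular components and dynamic context injection, can already be approximated with Python's standard `string.Template`. The component names and the context values below are hypothetical.

```python
from string import Template

# Reusable prompt fragments; $placeholders are filled in at request time.
COMPONENTS = {
    "role": "You are a support agent for $company.",
    "tone": "Reply in a $tone tone.",
    "task": "Answer the customer's question: $question",
}


def build_prompt(parts, context):
    """Join the chosen components and inject the context values."""
    text = "\n".join(COMPONENTS[p] for p in parts)
    return Template(text).substitute(context)


prompt = build_prompt(
    ["role", "tone", "task"],
    {"company": "Acme", "tone": "friendly", "question": "Where is my order?"},
)
```

`substitute` raises `KeyError` when a placeholder has no value, so a missing piece of context fails loudly instead of producing a half-filled prompt.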

Conclusion

Prompt engineering platforms represent a maturing stage in AI adoption. What began as informal experimentation is evolving into structured engineering practice. By enabling version control, testing frameworks, collaboration systems, analytics, governance, and integration workflows, these platforms help organizations move from creative exploration to operational reliability.

As AI becomes mission-critical in more industries, prompt management will no longer be optional. It will become infrastructure—essential for ensuring quality, safety, consistency, and measurable improvement at scale.

Frequently Asked Questions (FAQ)

1. What is a prompt engineering platform?

A prompt engineering platform is a tool or system that allows users to create, test, manage, version, and deploy AI prompts in a structured way. It provides collaboration tools, analytics, and testing capabilities beyond simple chat interfaces.

2. Why is version control important for prompts?

Version control ensures teams can track changes, compare iterations, and roll back to earlier versions if needed. This prevents confusion and improves reproducibility of AI outputs.

3. Can small teams benefit from prompt management tools?

Yes. Even small teams benefit from organized prompt libraries and testing workflows, especially when AI outputs are client-facing or revenue-generating.

4. How do prompt platforms evaluate output quality?

They use a mix of human review scoring and automated evaluation tools. Some platforms also allow testing against structured datasets to simulate real-world usage.

5. Are prompt engineering platforms only for developers?

No. Many platforms are designed for non-technical users such as marketers, writers, and operations teams, offering no-code environments and visual dashboards.

6. Do these platforms reduce AI hallucinations?

They cannot eliminate hallucinations, but structured testing, monitoring, and controlled parameter management can lower the risk of unpredictable outputs and help catch problems before they reach users.

7. What should organizations look for when choosing a platform?

Key considerations include version control capabilities, collaboration features, analytics depth, integration options, ease of use, compliance tools, and support for the desired AI models.